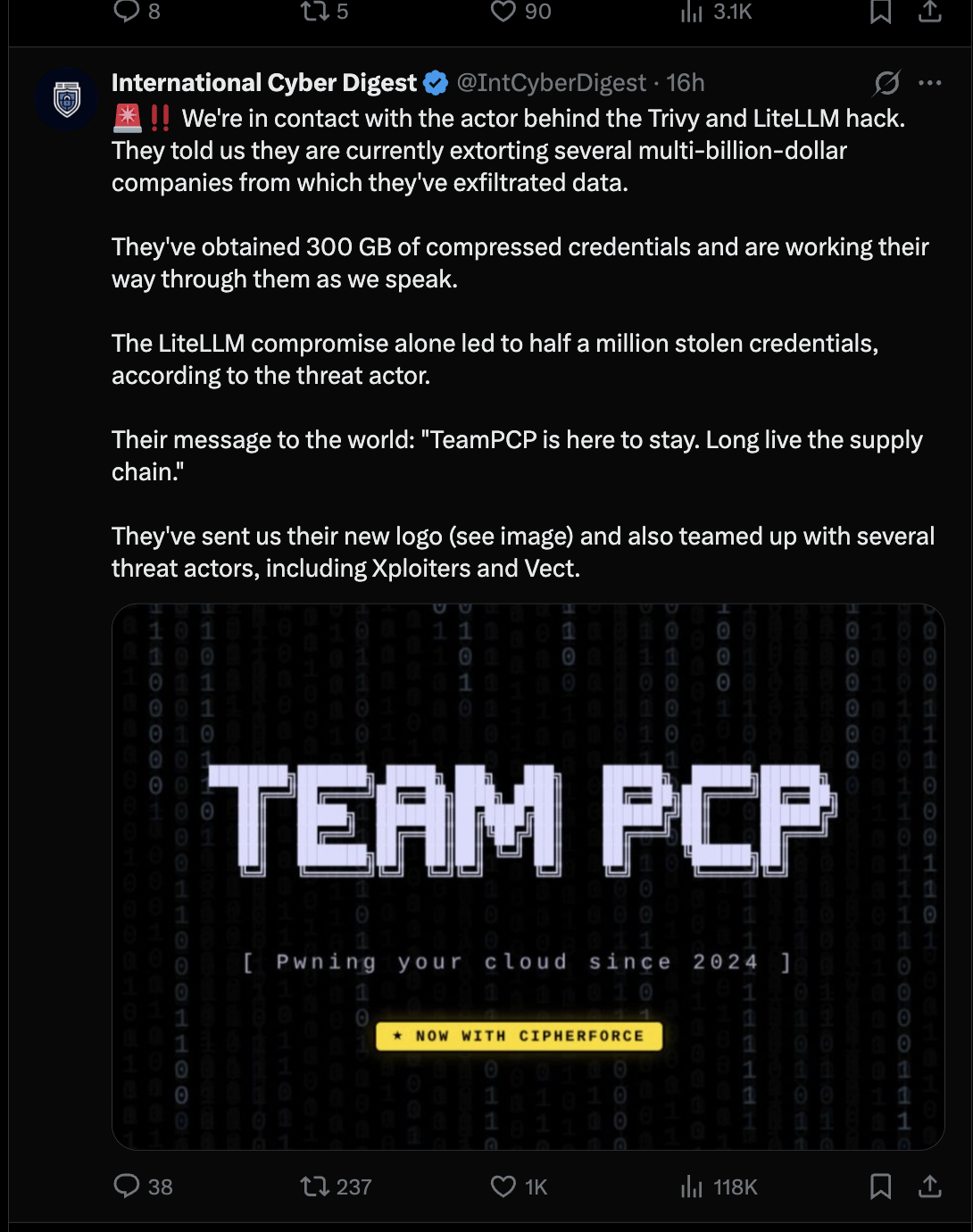

A single threat actor just compromised five software supply chain ecosystems in under 30 days.

The group known as TeamPCP started with one incomplete incident response at Aqua Security in late February. From there, they pivoted to Trivy's GitHub Actions, Docker Hub, npm, OpenVSX, and PyPI. They compromised security vendors Aqua and Checkmarx. They backdoored litellm, an AI infrastructure package with 95 million monthly downloads. And they're almost certainly not done.

They claim to hold more than 300GB of stolen credentials, have released a new logo, and say they have teamed up with two other threat actors known as Xploiters and Vect.

This isn't an anomaly. The structural conditions now exist for supply chain attacks at this scale to happen every month.

The SolarWinds playbook is now standard practice

What made SolarWinds exceptional in 2020 was the compromise of a trusted vendor by a sophisticated attacker. They distributed a backdoor through legitimate software update channels, affecting thousands of downstream organizations. And the whole thing went undetected for months. At the time, the industry treated it as a singular event — one nation-state actor, one vendor, one build pipeline.

If we look at the last eight months, that pattern has accelerated:

July 2025: A phishing campaign targeting npm maintainers compromised eslint-config-prettier, a package with over 30 million weekly downloads, along with eslint-plugin-prettier, synckit, and others. The stolen token gave attackers publishing access to packages totaling 180 million weekly downloads. The package named "is", maintained by a different developer, fell to the same phishing campaign days later.

August 2025: Attackers compromised the Nx build platform on npm, injecting malicious code into a legitimate, widely-used developer tool — and specifically designed the payload to evade AI code review.

September 2025: The Shai-Hulud campaign became the first successful self-propagating npm worm. It harvested credentials and used stolen tokens to automatically push malicious versions of every package an account could access, reaching over 500 package versions from major publishers, including CrowdStrike, before npm shut it down.

November 2025: Shai-Hulud 2.0 returned with refined tactics, proving the worm model wasn't a one-off but an iterating capability. This time, packages by major publishers, including Postman, Posthog, Zapier, and others, were compromised.

February 2026: An attacker compromised the Cline AI coding assistant by injecting a prompt into a GitHub issue title, stealing an npm publish token, and silently installing an autonomous AI agent on approximately 4,000 developer machines.

March 2026: TeamPCP compromised Trivy and then cascaded across five ecosystems in under 30 days: GitHub Actions, Docker Hub, npm, OpenVSX, and PyPI. They backdoored litellm, an AI infrastructure package with 95 million monthly PyPI downloads.

AI is accelerating both sides, but attackers are winning

Attackers have a new advantage. Researchers behind the CVE-GENIE framework demonstrated that an LLM-based multi-agent system can reproduce working exploits for 51% of real-world CVEs at an average cost of $2.77 per vulnerability.

Pair that with the explosion of known vulnerabilities (48,000 new CVEs published in 2025 alone), and you have a structural shift in the economics of offense. Exploit development that once required weeks of specialized labor now costs less than a cup of coffee and runs in hours.

But it's the other side of the AI equation that should concern you more.

AI coding agents are the new attack vector and the new attack surface

The Cline and Nx attacks from the timeline above represent an entirely new category of risk. Attackers are now specifically targeting AI coding agents and designing payloads to exploit the way those agents work.

The Cline attack chain is instructive: a prompt injection in a GitHub issue title tricked an AI triage bot into executing arbitrary commands. The initial access vector wasn't a zero-day or a phishing email. It was a sentence. The Endor Labs security research team confirmed that AI coding assistants executed malicious payloads in every test attempt. The agents trust without verifying — and when an AI agent runs npm install, preinstall scripts execute automatically with no opportunity for inspection.
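One way to restore an inspection step is to check a manifest for install-time hooks before anything runs. The sketch below is a minimal illustration, not a complete control: the function name `find_install_hooks` and the sample manifest are hypothetical, and it reads a single package.json rather than the full dependency tree.

```python
import json

# npm lifecycle hooks that run automatically during `npm install`.
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def find_install_hooks(package_json_text: str) -> dict[str, str]:
    """Return the install-time lifecycle scripts declared in a package.json."""
    manifest = json.loads(package_json_text)
    scripts = manifest.get("scripts") or {}
    return {name: scripts[name] for name in INSTALL_HOOKS if name in scripts}


if __name__ == "__main__":
    # Hypothetical manifest, for illustration only.
    sample = json.dumps({
        "name": "example-package",
        "version": "1.0.0",
        "scripts": {"preinstall": "node setup.js", "test": "jest"},
    })
    for hook, command in find_install_hooks(sample).items():
        print(f"{hook}: {command}")
```

A stricter option is to run installs with npm's `--ignore-scripts` setting so hooks never execute, and to enable scripts only for packages that have been reviewed.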

Here's what makes this qualitatively different from previous supply chain risks. AI coding agents don't just write code. They pull in dependencies, connect to MCP servers, use external tools, and trust context from files and prompts. Each of these is a potential attack vector. The supply chain is no longer just packages and registries — it's models, tools, server connections, and prompt context. A malicious MCP server or poisoned tool configuration can influence every line of code an agent writes.

What has to change

The convergence of AI-accelerated offense, exploding attack surfaces, and AI agents as both targets and vectors demands a fundamentally different approach to software supply chain security.

Secure the ingestion point, not just the pipeline. Every dependency decision is a security decision, whether it's made by a human or an AI agent. Organizations need package firewalls that enforce policy at the point of ingestion: across repositories, CI/CD pipelines, and developer machines.
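As a rough sketch of what policy at the point of ingestion could look like, the example below evaluates a single release against three rules. Everything here is an assumption made for illustration: the `PackageRelease` and `IngestionPolicy` types, the seven-day cooldown, and the rule set itself are hypothetical and do not describe any particular product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class PackageRelease:
    name: str
    version: str
    published_at: datetime       # timezone-aware publish time from the registry
    has_install_scripts: bool


@dataclass(frozen=True)
class IngestionPolicy:
    min_age: timedelta = timedelta(days=7)                # hypothetical cooldown
    blocked: frozenset[tuple[str, str]] = frozenset()     # (name, version) pairs
    allow_install_scripts: bool = False


def evaluate(release: PackageRelease, policy: IngestionPolicy, now: datetime) -> list[str]:
    """Return the policy violations for one release; an empty list means allow."""
    violations = []
    if (release.name, release.version) in policy.blocked:
        violations.append("version is on the blocklist")
    if now - release.published_at < policy.min_age:
        violations.append(f"published less than {policy.min_age.days} days ago")
    if release.has_install_scripts and not policy.allow_install_scripts:
        violations.append("declares install scripts")
    return violations


if __name__ == "__main__":
    now = datetime(2026, 3, 30, tzinfo=timezone.utc)
    release = PackageRelease("example-pkg", "1.2.3", now - timedelta(days=1), True)
    print(evaluate(release, IngestionPolicy(), now))
```

The same check has to run wherever a dependency can enter: the registry proxy, the CI job, and the developer machine, including installs triggered by an AI agent.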

Treat AI coding agents as privileged actors. When an AI agent can pull dependencies, execute shell commands, and connect to external servers, it needs the same governance you'd apply to any privileged service account. That means visibility into what models are in your applications, what tools your agents are connecting to, and what code they're generating, with enforcement to block risky actions.
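One small piece of that enforcement might be a gate in front of the agent's shell access. The sketch below is deliberately conservative and purely illustrative: the allowlist contents and the `is_command_allowed` helper are hypothetical, and a real control would also need to constrain arguments, working directories, and network access.

```python
import shlex

# Hypothetical allowlist of binaries an agent may run without human approval.
ALLOWED_BINARIES = frozenset({"git", "ls", "cat", "pytest"})

# Characters that enable chaining, substitution, or redirection in a shell.
SHELL_METACHARACTERS = frozenset(";|&$`<>(){}\n\\")


def is_command_allowed(command: str) -> bool:
    """Allow only a single, plain invocation of an allowlisted binary."""
    if any(ch in SHELL_METACHARACTERS for ch in command):
        return False
    try:
        tokens = shlex.split(command)
    except ValueError:  # for example, unbalanced quotes
        return False
    return bool(tokens) and tokens[0] in ALLOWED_BINARIES


if __name__ == "__main__":
    for candidate in ("git status", "npm install left-pad", "git status; curl http://example.invalid"):
        print(candidate, "->", is_command_allowed(candidate))
```

Anything the gate rejects, such as a package install, would be routed to a human or to a policy check rather than executed directly.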

Secure your build pipelines as dependencies. The tj-actions/changed-files compromise last year and the TeamPCP campaign this year both exploited GitHub Actions as attack vectors. Your CI/CD workflows are dependencies, too. Pin actions to commit SHAs, audit third-party actions for trust signals, and monitor for unexpected changes.
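Checking for SHA pinning is easy to automate. The following is a minimal sketch that scans workflow text line by line and reports any `uses:` reference that is not pinned to a full 40-character commit SHA. It assumes conventional workflow formatting and is not a YAML parser; the function name `unpinned_actions` and the `example-org/example-action` reference are hypothetical.

```python
import re

# Matches `uses: owner/repo@ref`, with or without a leading list dash or quotes.
USES_LINE = re.compile(r"^\s*(?:-\s*)?uses:\s*['\"]?([^'\"\s#]+)")
FULL_SHA = re.compile(r"^[0-9a-f]{40}$")


def unpinned_actions(workflow_text: str) -> list[str]:
    """Return action references that are not pinned to a full commit SHA."""
    unpinned = []
    for line in workflow_text.splitlines():
        match = USES_LINE.match(line)
        if not match:
            continue
        reference = match.group(1)
        if reference.startswith(("./", "docker://")):
            continue  # local actions and Docker images are out of scope here
        _, _, ref = reference.partition("@")
        if not FULL_SHA.match(ref):
            unpinned.append(reference)
    return unpinned


if __name__ == "__main__":
    sample = """\
steps:
  - uses: actions/checkout@v4
  - uses: example-org/example-action@0123456789abcdef0123456789abcdef01234567 # v1.2.3
  - name: Local build
    uses: ./.github/actions/build
"""
    for reference in unpinned_actions(sample):
        print("not pinned to a commit SHA:", reference)
```

Run as a CI check over every file in `.github/workflows`, a scan like this turns pinning from a recommendation into a gate.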

Build for containment, not just prevention. TeamPCP succeeded because each compromise cascaded to the next. The original Trivy incident response was incomplete. Residual access enabled the second compromise three weeks later, which unlocked everything that followed. Assume breach, limit blast radius, and verify that remediation is actually complete.

Demand more from the platforms, not just the practitioners. The Trivy attack wasn't primarily a Trivy failure. It was a GitHub one. Chainguard CEO Dan Lorenc put it bluntly: GitHub's Actions design is irresponsible and ignores a decade of supply chain security work from other ecosystems. Git tags are mutable: anyone with repo access can silently rewrite them, which is exactly how 75 version tags got poisoned overnight.

The pull_request_target workflow runs with full secret access to code from untrusted forks, and was the initial entry point for the first Trivy breach. There's no lockfile for Actions, no full dependency graph, no meaningful distinction between an actively maintained action and one a developer published three years ago and forgot about. The tools to fix all of this exist: Sigstore, OIDC, immutable tags backed by a transparency log, locked-down token defaults. What's been missing is the will to make secure the default instead of the opt-in. Until that changes, defenders are being asked to compensate for platform-level design debt with practitioner-level vigilance. That's not a sustainable equation.
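Until tags are immutable by default, defenders can at least detect when one moves. The sketch below parses the text output of `git ls-remote --tags` and compares it with a previously recorded snapshot. The helper names and the sample SHAs are hypothetical, and it assumes a trusted snapshot was stored somewhere the attacker cannot rewrite.

```python
def parse_ls_remote_tags(output: str) -> dict[str, str]:
    """Map tag name to commit SHA from `git ls-remote --tags` output."""
    tags: dict[str, str] = {}
    for line in output.splitlines():
        sha, _, ref = line.strip().partition("\t")
        if not ref.startswith("refs/tags/"):
            continue
        name = ref[len("refs/tags/"):]
        if name.endswith("^{}"):
            # Peeled entry: the commit that an annotated tag points at.
            tags[name[:-3]] = sha
        else:
            tags.setdefault(name, sha)
    return tags


def moved_tags(recorded: dict[str, str], current: dict[str, str]) -> dict[str, tuple[str, str]]:
    """Return tags whose commit changed, as name -> (recorded SHA, current SHA)."""
    return {
        name: (recorded[name], current[name])
        for name in recorded
        if name in current and recorded[name] != current[name]
    }


if __name__ == "__main__":
    # Hypothetical snapshots, for illustration only.
    before = parse_ls_remote_tags("a" * 40 + "\trefs/tags/v1.0.0\n")
    after = parse_ls_remote_tags("b" * 40 + "\trefs/tags/v1.0.0\n")
    for name, (old, new) in moved_tags(before, after).items():
        print(f"{name} moved from {old[:7]} to {new[:7]}")
```

Detection of this kind is a workaround for the platform gap described above, not a substitute for immutable references.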

The bottom line

SolarWinds was a wake-up call back in 2020. But it was also, at the time, considered a black swan event: one sophisticated campaign by one nation-state actor exploiting one vendor's build pipeline.

It's not exceptional anymore. Trusted publishers are being compromised at scale. Self-propagating worms automate the cascade from one stolen credential to hundreds of poisoned packages. AI has collapsed the cost of exploit development to pocket change. And AI coding agents have introduced an entirely new class of supply chain risk that most organizations haven't begun to address.

Could we see a SolarWinds-level supply chain attack every month? I believe we already are.

The threat model has changed. Security programs haven't. And right now, it's everyone's problem and nobody's program.

Next week, we're releasing new research that quantifies exactly how wide that gap is and what we need to do next as an industry, based on data from 605 organizations. Stay tuned.
