Introduction

On May 11, 2026, between 19:20 and 19:26 UTC, 84 malicious versions across 42 @tanstack/* packages were published to npm. The packages carried valid SLSA provenance attestations signed by the legitimate tanstack/router repository. Every modern supply-chain control was in place: 2FA on every maintainer, npm OIDC trusted publishing scoped to release.yml@refs/heads/main, no long-lived publish tokens, and provenance on every release.

None of those controls was broken in the conventional sense. The maintainer team’s postmortem confirms the attacker never stole an npm credential, never compromised a maintainer account, never bypassed branch protection, and never broke the OIDC trusted-publisher binding. They didn’t need to. They chained three published-but-frequently-overlooked GitHub Actions weaknesses to ride the legitimate publish workflow:

- A pull_request_target “Pwn Request”: a misconfigured workflow that runs fork-controlled code at base-repo trust level. This is the same vulnerability that was used to compromise Trivy in March 2026.

- GitHub Actions cache poisoning across the fork↔base trust boundary: using that fork-level code execution to write a poisoned cache entry under a key the production release workflow will later restore.

- OIDC token extraction from runner memory: when the cache is restored on a legitimate push to main, attacker binaries on the runner dump the GitHub Actions worker’s memory, lift the OIDC token the workflow mints for npm, and call registry.npmjs.org directly.

The malicious commit, the orphan-commit fork delivery via “github:” URLs, and the optionalDependencies trick that landed on every developer machine were all real, but they were the payload delivery layer, not the initial compromise. The initial compromise happened weeks earlier in spirit and roughly eight hours earlier in wall-clock time, in a Pull Request that was eventually force-pushed clean and quietly closed.

This post walks through the technique generically so you can assess whether your own pipeline is exposed.

Background: the building blocks

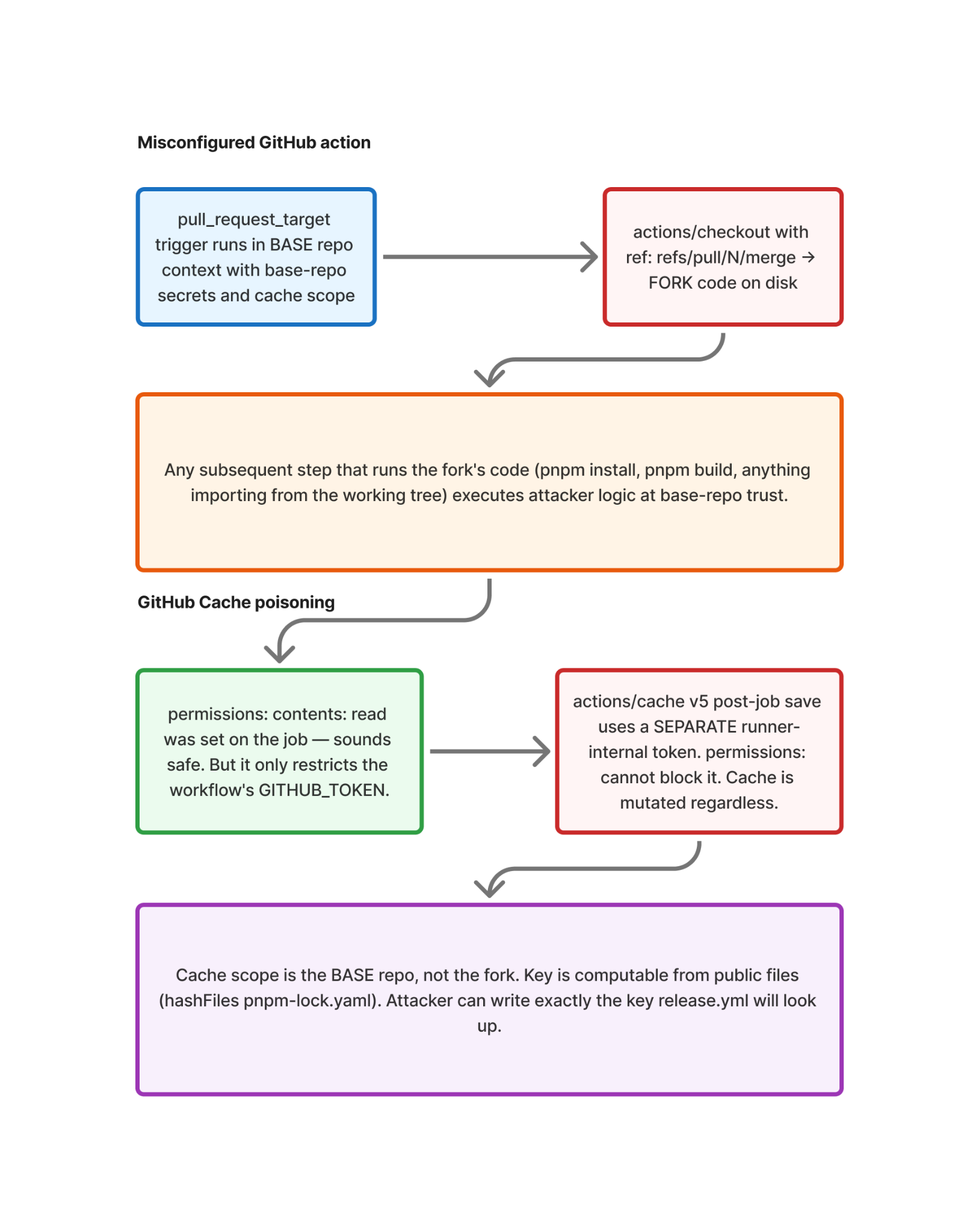

1. pull_request_target and the “Pwn Request” pattern

GitHub Actions distinguishes two PR triggers:

- pull_request runs in the fork’s trust context. It has no access to base-repo secrets and uses a read-only GITHUB_TOKEN. For PRs from first-time contributors, it requires manual approval to run at all.

- pull_request_target runs in the base repo’s trust context. It has access to base-repo secrets and uses a GITHUB_TOKEN with whatever permissions the workflow declares. It does not require approval for first-time contributors. By default, it checks out the base branch, not the PR head.

pull_request_target exists for legitimate jobs that need base-repo trust: labeling, commenting, leaving review feedback, triaging. The pattern goes catastrophically wrong when the workflow does actions/checkout at the PR head (or merge ref) and then executes that fork-controlled code. That’s the “Pwn Request” pattern, named by GitHub Security Lab in 2021. It has been the root cause of dozens of public CI compromises.

Often, the trap is subtle. A workflow runs pull_request_target, declares permissions: contents: read, splits jobs into a “trusted comment” job and an “untrusted build” job, and feels safe. The author has correctly reasoned about the workflow’s GITHUB_TOKEN. They have not reasoned about everything else the workflow can do.
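As a rough illustration of what to look for, the sketch below flags workflow text that combines the pull_request_target trigger, an actions/checkout step, and a ref taken from the PR. It is a text heuristic under stated assumptions, not a YAML-aware audit: the function name and both sample workflows are hypothetical, and a match means "review this by hand", nothing more.

```python
import re

# Heuristic patterns only; a thorough audit would parse the YAML instead.
TRIGGER = re.compile(r"\bpull_request_target\b")
CHECKOUT = re.compile(r"uses:\s*actions/checkout@")
PR_REF = re.compile(
    r"github\.event\.pull_request\.head\.(?:sha|ref)"
    r"|github\.head_ref"
    r"|refs/pull/.*?/(?:merge|head)"
)


def looks_like_pwn_request(workflow_text: str) -> bool:
    """True when the trigger, a checkout, and a PR-controlled ref all appear."""
    return all(p.search(workflow_text) for p in (TRIGGER, CHECKOUT, PR_REF))


# Hypothetical workflow: builds the PR head under pull_request_target.
RISKY = """\
on: pull_request_target
jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
        with:
          ref: ${{ github.event.pull_request.head.sha }}
      - run: pnpm install && pnpm build
"""

# Hypothetical workflow: same trigger, but it never checks out fork code.
LABEL_ONLY = """\
on: pull_request_target
jobs:
  triage:
    runs-on: ubuntu-latest
    steps:
      - run: echo "label the PR via the API here"
"""

print(looks_like_pwn_request(RISKY))       # True
print(looks_like_pwn_request(LABEL_ONLY))  # False
```

Note that the check says nothing about the permissions: block. That is deliberate: as the next section explains, a locked-down GITHUB_TOKEN does not make a flagged workflow safe.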

2. GitHub Actions cache and the trust boundary

GitHub Actions cache is per-repository. A pull_request_target run uses the base repository’s cache scope, regardless of whose code triggered it. Cache keys are deterministic, typically computed with hashFiles('**/lock.file'), and those input files are all public, so an attacker can compute the exact key for any cache entry they choose to target. But how does attacker-controlled content get saved under that key?

Crucially, actions/cache@v5’s post-job save does not use the workflow’s GITHUB_TOKEN. It uses a separate runner-internal token. Setting permissions: contents: read on the job does not block cache mutation. The cache step will happily save whatever is in the cache path at the end of the job, irrespective of the job’s assigned permissions.

So a pull_request_target workflow that runs fork code, even with permissions: locked down, can still write arbitrary bytes to the base repo’s cache under any key it can compute. That key can be exactly the key the production release workflow will look up on the next push to main.
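To make the "any key it can compute" point concrete, here is a minimal sketch of a key derived purely from public file contents. It follows GitHub's documented description of hashFiles (a SHA-256 per file, then a final SHA-256 over those hashes) but is not claimed to reproduce GitHub's output byte for byte. The function names and the lockfile bytes are hypothetical.

```python
import hashlib


def files_digest(file_contents: list[bytes]) -> str:
    """SHA-256 each file, then SHA-256 the concatenated per-file digests."""
    outer = hashlib.sha256()
    for content in file_contents:
        outer.update(hashlib.sha256(content).digest())
    return outer.hexdigest()


def cache_key(prefix: str, file_contents: list[bytes]) -> str:
    """Build a key of the form <prefix>-<digest of public files>."""
    return f"{prefix}-{files_digest(file_contents)}"


# Hypothetical lockfile bytes; anyone can fetch the real ones from a public repo.
public_lockfile = b"lockfileVersion: '9.0'\n"

release_key = cache_key("Linux-pnpm-store", [public_lockfile])
fork_key = cache_key("Linux-pnpm-store", [public_lockfile])
print(release_key == fork_key)  # True
```

Nothing in the derivation depends on who is running it, so the fork run and the release run arrive at the same key.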

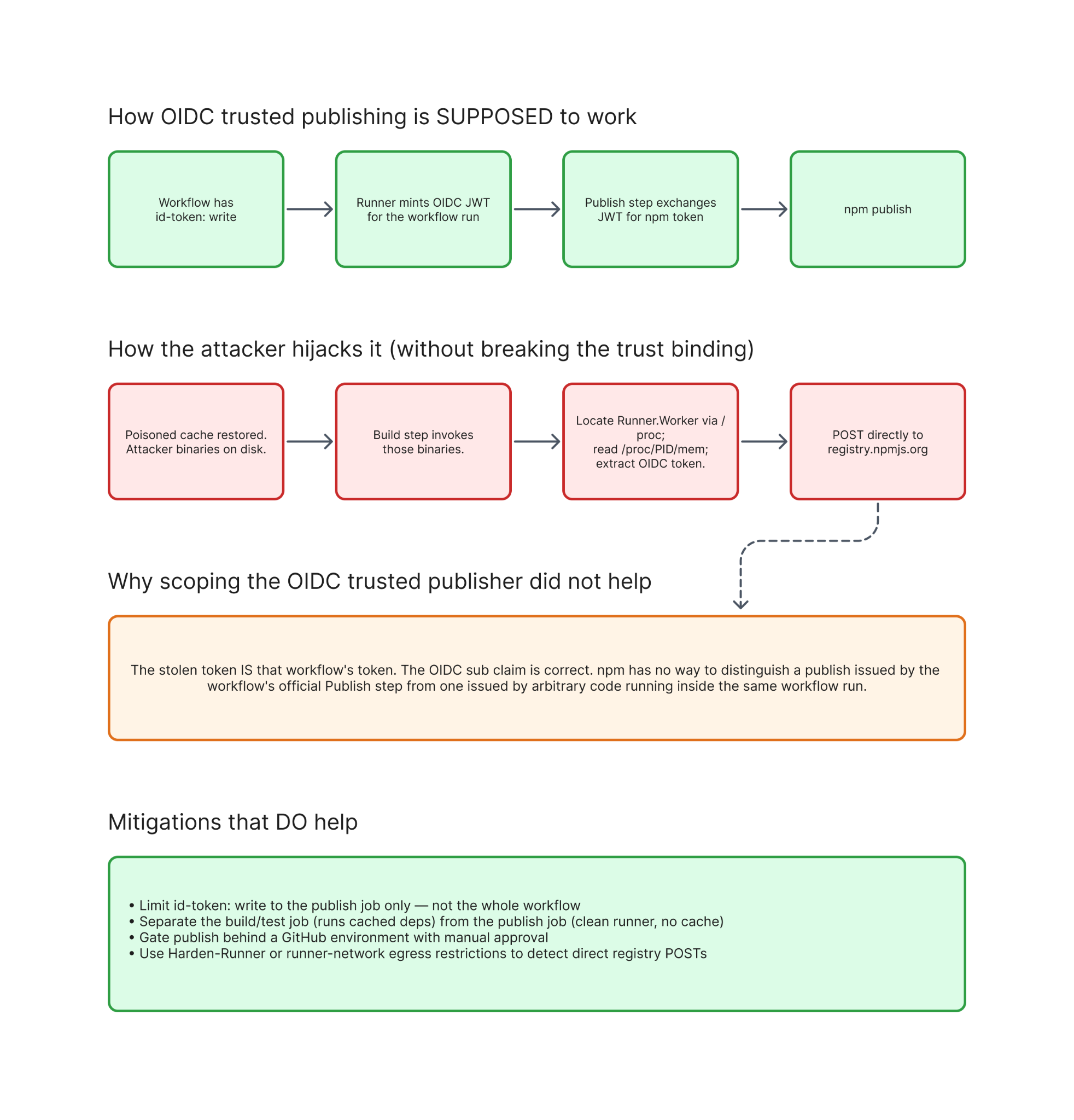

3. OIDC trusted publishing and id-token: write

npm’s “trusted publishing” replaces long-lived _authToken strings with a per-run exchange: a workflow with permissions: id-token: write requests an OIDC JWT from GitHub, presents it to the npm registry, and receives a short-lived publish token. npm validates the JWT’s sub claim against a configured policy, typically:

repo:org/repo:ref:refs/heads/main:job_workflow_ref:org/repo/.github/workflows/release.yml@refs/heads/main

The token does not sit in a secret store. It is minted lazily, in memory, by the GitHub Actions runner process when a workflow step requests it.

This matters because:

- The JWT is correct.

- The trust binding is correct.

- The publish token is real.

If an attacker can extract that token from the runner’s memory during the legitimate workflow run, they can publish, and npm has no way to distinguish their publish from the workflow’s own.
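The sketch below shows why the registry cannot tell the difference. It decodes a JWT payload and compares claims against a policy. The token is an unsigned stand-in built for the demo, the policy fields are an assumption about what a registry might check (repository, ref, and job_workflow_ref are real GitHub OIDC claim names, but npm's actual validation logic is not shown here), and signature verification is omitted entirely.

```python
import base64
import json


def b64url_decode(segment: str) -> bytes:
    """Decode base64url, restoring the padding that JWTs strip."""
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def jwt_claims(token: str) -> dict:
    """Return the payload claims of a JWT without verifying its signature."""
    _header, payload, _signature = token.split(".")
    return json.loads(b64url_decode(payload))


def matches_policy(claims: dict, policy: dict) -> bool:
    """True when every policy field is present in the claims with the same value."""
    return all(claims.get(name) == value for name, value in policy.items())


def unsigned_demo_token(claims: dict) -> str:
    """Build an unsigned token for demonstration only."""
    def enc(obj: dict) -> str:
        raw = json.dumps(obj).encode()
        return base64.urlsafe_b64encode(raw).rstrip(b"=").decode()
    return f"{enc({'alg': 'none'})}.{enc(claims)}."


# Hypothetical policy and claims, using the placeholder org/repo from above.
POLICY = {
    "repository": "org/repo",
    "ref": "refs/heads/main",
    "job_workflow_ref": "org/repo/.github/workflows/release.yml@refs/heads/main",
}
token = unsigned_demo_token({**POLICY, "event_name": "push"})

claims = jwt_claims(token)
print(matches_policy(claims, POLICY))  # True
print(sorted(claims))  # no claim names the step or process that asked
```

The policy check passes for whoever presents the token. The claims describe the workflow run, not the process inside it that made the request.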

4. github: dependencies and orphan-fork payload delivery

npm dependency specifiers accept Git URLs:

"@tanstack/setup": "github:tanstack/router#79ac49ee..."

When npm resolves this, it clones the repository at the given SHA and runs the prepare lifecycle hook. Because GitHub stores commit objects in shared storage across a repository and all its forks, a commit pushed to any fork is reachable through the parent’s URL. An orphan commit (no parent, never on any branch) pushed to a fork is still addressable via github:parent/repo#<sha>.

This is the payload delivery primitive — not the initial compromise. The attacker uses it to ship the actual credential-stealing malware to victims after the malicious package has been published. The malicious package’s package.json carries an optionalDependencies entry pointing at the orphan commit; npm clones it and runs prepare on every install.

The end-to-end attack

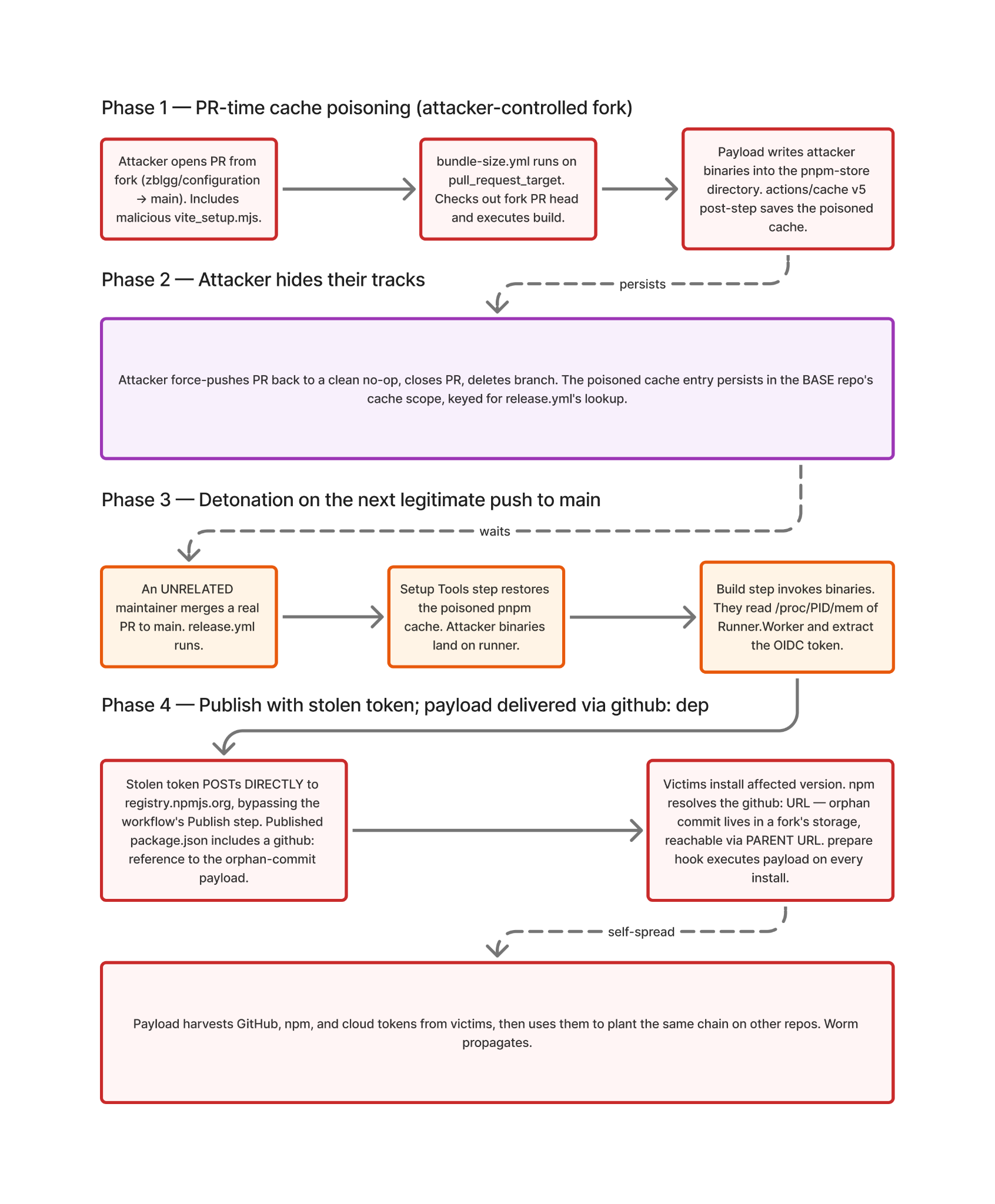

Diagram 1. End-to-end attack flow.

There are four phases:

Phase 1 — PR-time cache poisoning

The attacker forks the target repository, often under a deliberately misleading name (in the TanStack case, zblgg/configuration — renamed to evade fork-list searches). They open a Pull Request against main with a commit that adds a single innocuous-looking file deep in the tree (in this case, packages/history/vite_setup.mjs).

The base repo’s pull_request_target workflows fire automatically. Any first-time-contributor approval gate is bypassed — pull_request_target doesn’t enforce it. The “Pwn Request” job checks out the PR’s merge ref and runs the build:

Diagram 2. Pwn Request: pull_request_target runs fork code at base-repo trust, and cache writes bypass the permissions: setting.

The build step, executing fork-controlled code at base-repo trust, runs the malicious payload. The payload doesn’t try to do anything dramatic in this phase. It just writes attacker binaries into the pnpm-store (or npm-store, or build cache) directory, then exits cleanly so the job succeeds.

actions/cache@v5’s post-job step then saves the contents of that directory to the base repo’s cache, under a key the attacker has arranged to match exactly what the production release workflow will request on its next run:

Linux-pnpm-store-${hashFiles('**/pnpm-lock.yaml')}

Phase 2 — Erase the evidence

The attacker force-pushes the PR back to a clean state (in the TanStack case, all the way back to the current main HEAD, making the visible PR a zero-file no-op), closes the PR, and deletes the branch. To anyone reviewing the closed PR list, nothing happened. The poisoned cache entry remains in the base repo’s cache scope, dormant.

Phase 3 — Detonation on the next legitimate push to main

This is the elegant part. The attacker does not trigger the publish to npm themselves. They wait. Eventually, some unrelated maintainer merges a real PR (a CSS fix, a TypeScript paths tweak, anything that pushes to main). That push triggers release.yml. The Setup Tools step restores the cache. The poisoned pnpm-store entry lands on the runner. Attacker binaries are now on disk.

The build step proceeds normally. It imports modules from the cache (because that is what pnpm caches are for). Some of those modules are now attacker binaries. They execute as part of the workflow’s own process tree, with all of that workflow’s runtime privileges.

Phase 4 — OIDC token theft and direct publish

Diagram 3. OIDC token theft: the legitimate token is stolen from the runner's memory.

release.yml declares permissions: id-token: write because it legitimately needs to publish to npm. The GitHub Actions runner mints the OIDC JWT in memory in the Runner.Worker process when the publish step requests it.

The attacker binaries, now running on the same runner, do the following — using the same script and the same /proc/<pid>/mem technique that the tj-actions/changed-files compromise used in March 2025, verbatim:

- Walk /proc/*/cmdline to find the Runner.Worker PID.

- Read /proc/<pid>/maps to locate writable memory regions.

- Read /proc/<pid>/mem to dump those regions.

- Scan for the OIDC JWT structure and extract it.

- POST directly to https://registry.npmjs.org with the JWT, receive a short-lived publish token in response.

- Construct package tarballs for every package the scope can publish, each with optionalDependencies pointing at the orphan-commit payload in the fork network, and publish each one via npm publish against the registry.

The workflow’s own Publish Packages step never runs — in TanStack’s case, tests failed, and the publish step was skipped. The publish has already happened, from inside the same workflow run, just not from the step that was supposed to do it.

The published packages carry valid SLSA provenance. The provenance attestation says, accurately, that they were built by release.yml@refs/heads/main in tanstack/router. That is true. The attestation cannot encode which step in the workflow actually ran npm publish.

Why defenses failed

This is the part worth lingering on, because every supply-chain control that the security community has been recommending was in place.

- 2FA on every maintainer account. Irrelevant. The attacker never authenticated as a maintainer. The publish happened from CI.

- OIDC trusted publishing scoped to release.yml@refs/heads/main. Correctly configured. The stolen token was that workflow’s token. The OIDC sub claim matched because it was the real claim.

- SLSA provenance. Correctly generated. It attested to the workflow file, the ref, and the commit, all legitimate values. Provenance does not encode workflow-step lineage.

- Branch protection on main. Untouched. The push that triggered the malicious workflow run was a real maintainer’s real merge.

- First-time-contributor approval gate. Bypassed by design. pull_request_target doesn’t enforce it; only pull_request does. The pull_request workflow on the same PR sat correctly waiting for approval that never came.

- permissions: contents: read. Does not apply to cache writes. The post-job cache save uses a separate runner-internal token, so this setting does not block cache mutation.

- Code review. The malicious commit was force-pushed away before any human looked at the PR. The closed PR shows a no-op diff.

The attack chain crosses three trust boundaries in sequence, and each boundary’s defender was reasoning about a different threat model than the actual one.

The trust-boundary view

The reason this technique is hard to defend against in isolation is that each link uses the correct behavior of the previous link as its bridge.

- The fork PR crosses into base-repo trust because pull_request_target is supposed to give it base-repo trust. That’s the trigger’s documented purpose.

- The poisoned cache crosses from PR runs into push-to-main runs because the cache scope is per-repo by design. Sharing across triggers is the feature.

- The stolen token crosses from the runner’s memory into the attacker’s HTTP client because the runner has to have the token in memory to use it. There is no enclave.

- The malicious publish crosses from CI into the global npm registry because that is the publish workflow’s entire job.

The only place to break the chain reliably is at the first crossing: don’t let fork code run with base-repo trust in the first place.

Protecting your SDLC

In rough order of impact:

Audit every pull_request_target workflow. If it checks out PR head/merge refs and runs the fork’s code (npm install, build, test — anything that executes JS), it is a Pwn Request. Either convert it to pull_request (accept the secret-access loss), or split it into two workflows: a pull_request job that does the build in fork trust, and a separate pull_request_target job that only consumes the build’s output via artifacts. GitHub Security Lab has a canonical write-up of safe patterns.

Treat Actions cache as untrusted. Do not restore cache entries in publish-critical workflows. Or, restore them only in a sandboxed job that produces verified artifacts that the publish job consumes. If you must restore cache, key it on something more than hashFiles of public files — include a secret, or scope it to a path that fork-PR runs never touch.
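One way to picture "include a secret" is a keyed hash: the lockfile still determines the key, but so does a value the fork never sees. This is a minimal sketch of the idea only. The function name and secret values are hypothetical, and it helps only if the secret is unavailable to every job that runs fork code, which a pull_request_target workflow with access to base-repo secrets would not guarantee.

```python
import hashlib
import hmac


def salted_cache_key(prefix: str, lockfile: bytes, secret: bytes) -> str:
    """Derive a cache key that cannot be computed from public files alone."""
    mac = hmac.new(secret, lockfile, hashlib.sha256).hexdigest()
    return f"{prefix}-{mac}"


# Hypothetical inputs: the lockfile is public, the secret is not.
lockfile = b"lockfileVersion: '9.0'\n"
release_key = salted_cache_key("Linux-pnpm-store", lockfile, b"release-only-secret")
guessed_key = salted_cache_key("Linux-pnpm-store", lockfile, b"attacker-guess")

print(release_key == guessed_key)  # False
```

Without the secret, a fork run can still write cache entries, but not under the key the release workflow will restore.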

Scope id-token: write to the publish job only. A workflow-level permissions: id-token: write means every job can mint the token. Move it to a single dedicated publish job that does nothing else, on a fresh runner, with no restored caches and no third-party actions. Build and test in separate jobs.
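A quick way to find workflow-level grants is to look for id-token: write under a top-level permissions: key. The sketch below does this by indentation rather than by parsing YAML, so treat it as a first-pass filter. The function name and both sample workflows are hypothetical.

```python
import re

BROAD_GRANT = re.compile(r"write-all|id-token:\s*write\b")


def workflow_level_id_token(workflow_text: str) -> bool:
    """True if id-token: write is granted under a top-level permissions: key."""
    in_top_permissions = False
    for line in workflow_text.splitlines():
        if not line.strip() or line.lstrip().startswith("#"):
            continue  # skip blank lines and comments
        if not line[0].isspace():
            # An unindented line starts a new top-level key.
            in_top_permissions = line.startswith("permissions:")
            if in_top_permissions and BROAD_GRANT.search(line):
                return True  # inline form, e.g. permissions: write-all
            continue
        if in_top_permissions and re.match(r"\s+id-token:\s*write\b", line):
            return True
    return False


# Hypothetical workflow: every job can mint the token.
BROAD = """\
on: push
permissions:
  id-token: write
  contents: read
jobs:
  build:
    runs-on: ubuntu-latest
"""

# Hypothetical workflow: only the publish job can mint the token.
SCOPED = """\
on: push
jobs:
  publish:
    runs-on: ubuntu-latest
    permissions:
      id-token: write
"""

print(workflow_level_id_token(BROAD))   # True
print(workflow_level_id_token(SCOPED))  # False
```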

Pin third-party actions to commit SHAs, not floating refs. actions/checkout@v6.0.2 is not pinned; it’s a tag that can be retargeted. actions/checkout@<sha> is pinned. This won’t stop the cache-poisoning chain by itself, but it closes the parallel risk of tj-actions/changed-files-style compromises.
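The distinction is easy to check mechanically: a pinned reference ends in a full 40-character commit SHA, and anything else is a tag or branch. The following sketch lists uses: entries that are not pinned. The function name is hypothetical, and the SHA in the sample is a placeholder, not a real commit.

```python
import re

USES = re.compile(r"^\s*(?:-\s*)?uses:\s*([^\s#]+)", re.MULTILINE)
FULL_SHA = re.compile(r"^[0-9a-f]{40}$")


def unpinned_actions(workflow_text: str) -> list[str]:
    """Return every uses: reference that is not pinned to a full commit SHA."""
    flagged = []
    for raw in USES.findall(workflow_text):
        spec = raw.strip("'\"")
        if spec.startswith(("./", "docker://")):
            continue  # local actions and container images are out of scope here
        _name, _sep, ref = spec.partition("@")
        if not FULL_SHA.match(ref):
            flagged.append(spec)
    return flagged


# Hypothetical steps; the 40-character SHA is a placeholder value.
SAMPLE = """\
steps:
  - uses: actions/checkout@v6.0.2
  - uses: actions/setup-node@0123456789abcdef0123456789abcdef01234567
  - uses: ./.github/actions/local
"""

print(unpinned_actions(SAMPLE))  # ['actions/checkout@v6.0.2']
```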

Add an environment with manual approval to the publish job. Even a single-reviewer environment turns silent CI publishes into deliberate ones, and an attacker stealing an in-memory OIDC token cannot satisfy the human gate.

Use a Harden-Runner-style egress allowlist. A workflow that talks to registry.npmjs.org outside its declared publish step is a strong anomaly. The original detection for TanStack came from an external researcher; an in-pipeline egress monitor would have flagged the same anomaly immediately.

Audit your optionalDependencies and github: dependencies. None of these should be present in production lockfiles in normal cases. Set ignore-scripts=true for CI runners that do not need to build native modules.
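As a starting point for that audit, the sketch below lists Git-hosted specifiers in a package.json manifest. It is applied to a manifest rather than a lockfile for brevity, the function name is hypothetical, and the prefix list is an assumption that does not cover every form npm accepts (for example, the bare user/repo shorthand).

```python
import json

# Assumed set of Git-style specifier prefixes; not exhaustive.
GIT_PREFIXES = ("github:", "gitlab:", "bitbucket:", "git+", "git://")
SECTIONS = (
    "dependencies",
    "devDependencies",
    "optionalDependencies",
    "peerDependencies",
)


def git_dependencies(package_json_text: str) -> list[tuple[str, str, str]]:
    """Return (section, name, specifier) for every Git-hosted dependency."""
    manifest = json.loads(package_json_text)
    findings = []
    for section in SECTIONS:
        for name, spec in manifest.get(section, {}).items():
            if isinstance(spec, str) and spec.startswith(GIT_PREFIXES):
                findings.append((section, name, spec))
    return findings


# Manifest fragment modeled on the specifier quoted earlier in this post.
MANIFEST = json.dumps({
    "name": "example",
    "dependencies": {"left-pad": "^1.3.0"},
    "optionalDependencies": {
        "@tanstack/setup": "github:tanstack/router#79ac49ee...",
    },
})

for finding in git_dependencies(MANIFEST):
    print(finding)
```

Any hit in optionalDependencies deserves particular attention, since a failed optional install does not fail the overall install.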

If you installed any version of an affected package, rotate everything reachable from the install host: GitHub, npm, AWS/GCP, Kubernetes, Vault, and SSH. The payload runs at npm install time and harvests broadly.

The structural problem

The chain TanStack walked through is not novel. Every link is documented in public research that the postmortem cites, including GitHub Security Lab’s 2021 Pwn Request writeup and Adnan Khan’s 2024 cache poisoning paper. The attacker reused the published code, complete with attribution comments, and chained these techniques into a single attack.

That’s the lesson worth taking. The defensive tooling we have today — SLSA provenance, OIDC trusted publishing, 2FA, branch protection — is built around the threat model of stolen credentials and direct repository compromise. Those defenses work. The attacker did not try to break them. They built a chain that lets a legitimate workflow do the malicious work, using each defense’s correctness as part of the attack.

Until GitHub Actions provides a stronger isolation boundary between cache-restoring jobs and publishing jobs — and until OIDC tokens are scoped to specific workflow steps rather than entire workflow runs — the burden falls on every individual workflow author to manually reason about trust boundaries that the platform does not enforce. That is not a model that scales across the open-source ecosystem.

The next compromise might look different in detail but identical in shape: legitimate publish pipeline, legitimate trust binding, attacker code running inside the bounds of the workflow run, no credentials ever stolen in the classical sense.
