Security flaws in software are a fact of life.

While proper development practices can reduce their frequency and severity, the industry’s reliance on open source software for pre-built, reusable functionality makes vulnerabilities (and sometimes even malicious backdoors) unavoidable.

As with any source of risk, the key is to manage vulnerabilities cost-effectively rather than attempting to eliminate them completely. Resources - especially when it comes to development teams - are always limited, so focusing on the biggest issues first is crucial to delivering products on schedule while still doing so securely.

5 Key Methods for Evaluating SCA Results

In this post we’ll go into the five key methods for evaluating known vulnerabilities in software and provide you with the tools to make smart prioritization decisions:

- Common Vulnerability Scoring System (CVSS)

- Known Exploited Vulnerabilities (KEV)

- Stakeholder-Specific Vulnerability Categorization (SSVC)

- Exploit Prediction Scoring System (EPSS)

- Reachability Analysis

CVSS: An (Outdated?) Industry Standard

The Common Vulnerability Scoring System (CVSS) has been a cybersecurity staple since version 1.0 was released in 2005.

But it’s showing its age.

Maintained by the Forum of Incident Response and Security Teams (FIRST), CVSS is a standardized scoring rubric for software vulnerabilities. And the ability to describe them in a consistent manner - irrespective of vendor or system - did offer some advantages over the relative anarchy that existed prior to its introduction.

CVSS scores range from 0 to 10, with higher numbers representing more severe issues. The standard also maps these numerical ratings to qualitative descriptions:

- Low: 0.1 - 3.9

- Medium: 4.0 - 6.9

- High: 7.0 - 8.9

- Critical: 9.0 - 10.0
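The bands above can be encoded as a small lookup. This is a minimal Python sketch: the function name `cvss_rating` is illustrative, and the "None" label for a score of exactly 0.0 comes from the CVSS v3.x rating scale rather than from the list above.

```python
def cvss_rating(score: float) -> str:
    """Map a CVSS score (0.0 to 10.0) to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError(f"CVSS scores range from 0.0 to 10.0, got {score}")
    if score == 0.0:
        return "None"  # v3.x label for a zero score; not part of the list above
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


# Print the rating on each side of every band boundary.
for s in (0.1, 3.9, 4.0, 6.9, 7.0, 8.9, 9.0, 10.0):
    print(s, cvss_rating(s))
```

Note how little the label says on its own: a 7.0 and an 8.9 both come back as "High".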

Perhaps most importantly, the National Institute of Standards and Technology (NIST) National Vulnerability Database (NVD) publicly lists CVSS scores for approximately 200,000 known Common Vulnerabilities and Exposures (CVEs).

Unfortunately, CVSS has many limitations:

- At a conceptual level, because of its roughly 1.0-10.0 scale, the standard implies the worst vulnerability is only about ten times worse than the least severe one. From real-world events, this is clearly not the case. For example, the (Not)Petya ransomware targeted one vulnerability (CVE-2017-0144) and caused ~$10 billion in damage, while most known vulnerabilities never result in a single dollar of loss. Vulnerabilities follow a power-law distribution whereby a very small number are incredibly consequential while most pose little risk. The CVSS does not reflect this reality.

- Even worse, the majority (~58% as of this writing) of CVSS-rated vulnerabilities in the NVD are rated either “high” or “critical,” making it extremely difficult to determine which of these is actually the most important. Especially since many information security teams use the arbitrary threshold of 7.0 when deciding whether to remediate issues, the fact that most of them exceed it makes prioritization difficult.

- There is no way to compare groups of vulnerabilities with each other using the CVSS’ qualitative system. It is impossible to say whether 10 “critical” vulnerabilities are less or more concerning than 100 “high” ones. While the CVSS appears to use quantitative measures, upon inspection the basis for this is simply transforming qualitative descriptions (such as “low” confidentiality impact) to numbers.

- While the standard was amended in 2019 to state that “CVSS Measures Severity, not Risk,” the fact that it comprises both exploitability and impact components implies otherwise. As a result, many practitioners are confused by this messaging and nonetheless use CVSS as the key metric for vulnerability risk decisions.

Version 4.0 of the standard, which is in public preview as of this post’s release, acknowledges some of the problems associated with prior versions, but unfortunately still retains many of them. Although it changes some of the standard’s nomenclature and allows for more granular analysis of the safety impacts, cost of recovery, and prevalence of a given vulnerability, version 4.0 does not appear to address the core critiques of the system.

With that said, due to the widespread use of the CVSS, it is unlikely it will go away soon. Understanding its limitations and how to use it correctly is key for information security practitioners.

KEV: A Catalog of Known Exploits

In part because of the massive quantities of “high and critical” vulnerabilities security teams must deal with, the Department of Homeland Security's Cybersecurity and Infrastructure Security Agency (CISA) created a new category: Known Exploited Vulnerabilities (KEV).

According to CISA, the criteria for inclusion in its KEV catalog are:

- The vulnerability has an assigned Common Vulnerabilities and Exposures (CVE) ID.

- There is reliable evidence that the vulnerability has been actively exploited in the wild.

- There is a clear remediation action for the vulnerability, such as a vendor-provided update.

CISA somewhat confusingly writes that “the main criteria…is whether the vulnerability has been exploited or is under active exploitation” but then goes on to say in “reference to the KEV catalog, active exploitation and exploited are synonymous.” Some understandably - but mistakenly - view the KEV catalog as a continuously-updated register of CVEs undergoing active exploitation. In fact, it is simply a historical record.

The focus on real-world exploitation data makes the KEV an additional useful source of data on top of the CVSS. But this approach also has problems such as:

Binary output — The KEV is essentially a list of CVEs that meet its criteria, and it provides little additional information. A vulnerability may have been exploited once and never again, for example because easily applied compensating controls such as firewall rules blocked further attacks.

Vague data sources — CISA describes KEV catalog data sources as “security vendors, researchers, and partners. CISA also obtains exploitation information through U.S. Government and international partners, via open-source research performed by CISA analysts, and through third-party subscription services.” This lack of transparency is suspicious for several reasons:

- In the government’s words, “CISA maintains the authoritative source of vulnerabilities that have been exploited in the wild.” As of this writing, the KEV catalog had 977 entries. This is substantially fewer entries than are tracked by other organizations (the Exploit Prediction Scoring System - discussed below - has 12,243 of these records). So “authoritative” seems like a stretch.

- Updates to the KEV can happen unpredictably. For example, in May of 2023, CISA added CVE-2004-1464 to the KEV catalog, citing “evidence of active exploitation.” Oddly, the vendor of the impacted product had already noted such evidence 19 years earlier, making it unclear why CISA waited so long to add this issue.

Because the U.S. government maintains the KEV catalog, it represents a common reference point for both America and the rest of the world. It also provides an additional layer of intelligence to drive vulnerability remediation, so security teams should understand how it works while remaining aware of its limitations.
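Because the catalog is essentially a list, checking scanner findings against it comes down to a set lookup. The sketch below uses a tiny inline sample instead of downloading the real feed. The field names (`vulnerabilities`, `cveID`) are assumptions about the feed's JSON shape and should be verified against the file you actually use; `CVE-0000-0000` is a placeholder ID, not a real CVE.

```python
import json

# Illustrative sample only. The field names are assumed to mirror the
# KEV JSON feed; confirm them against the file you download.
SAMPLE_KEV = json.loads("""
{
  "vulnerabilities": [
    {"cveID": "CVE-2004-1464"},
    {"cveID": "CVE-2017-0144"}
  ]
}
""")


def kev_ids(catalog: dict) -> set:
    """Collect every CVE ID that appears in the catalog."""
    return {entry["cveID"] for entry in catalog["vulnerabilities"]}


def split_by_kev(findings: list, catalog: dict) -> tuple:
    """Split findings into (listed in KEV, not listed), preserving input order."""
    known = kev_ids(catalog)
    listed = [cve for cve in findings if cve in known]
    unlisted = [cve for cve in findings if cve not in known]
    return listed, unlisted


# CVE-0000-0000 is a placeholder standing in for an unlisted finding.
listed, unlisted = split_by_kev(["CVE-2004-1464", "CVE-0000-0000"], SAMPLE_KEV)
print("In KEV:", listed)
print("Not in KEV:", unlisted)
```

The yes/no answer is all the lookup returns, which is the "binary output" limitation described above: absence from the list says nothing about how likely exploitation is.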

SSVC: A Decision Tree Tool

Another approach seeking to address the flaws of the CVSS is the Stakeholder-Specific Vulnerability Categorization (SSVC), whose abbreviation is, not coincidentally, CVSS spelled backwards. Pioneered by Carnegie Mellon University's Software Engineering Institute (SEI) and formally endorsed by CISA in 2022, SSVC provides a structured decision tree tool for determining how to evaluate and respond to vulnerabilities.

Some challenges with the SSVC approach include vagueness, subjectivity, and difficulty with automation.

Vagueness — According to the CISA version, there are four potential categorizations, none of which provide concrete guidance:

- Track: The vulnerability does not require action at this time.

- Track*: CISA recommends remediating Track* vulnerabilities within standard update timelines.

- Attend: CISA recommends remediating Attend vulnerabilities sooner than standard update timelines.

- Act: CISA recommends remediating Act vulnerabilities as soon as possible.

Subjectivity — Because the SSVC is a decision tree, it requires judgment calls about things like whether an attack would have “partial” or “total” mission impact or whether the resulting damage is “low,” “medium,” or “high.” Different people might reasonably come to different conclusions regarding any of these decision points.

Difficulty with Automation — As making decisions about how to classify vulnerabilities will generally require human analysis (although advances in generative artificial intelligence might make this unnecessary), it can be challenging for an organization to apply the SSVC to thousands or even millions of known issues in its network.

Tailoring SSVC

Tailoring the SSVC to your organization and developing more concrete decision points could potentially make it a useful tool. For example, these criteria would benefit from more specificity:

- Technical Impact Decision Values (by default “Partial” and “Total” are the only options)

- Mission Prevalence Decision Values (“minimal,” “support,” and “essential” could benefit from financial or other values being associated with them)

- Public Well-Being Impact Decision Values (“minimal,” “material,” and “irreversible” are defined by CISA but still cover a huge range of outcomes)

Without these, however, using the SSVC could result in problems similar to those that have plagued the CVSS.
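To make "more concrete decision points" tangible, here is a sketch of what a tailored tree might look like once judgment calls are replaced with explicit thresholds. It is deliberately simplified and is not CISA's published decision tree: the dollar bands, the `Assessment` fields, and the branch logic are all hypothetical values an organization would need to set for itself. Only the four outcome labels come from the CISA categories listed earlier.

```python
from dataclasses import dataclass

# Hypothetical dollar bands replacing subjective "low/medium/high" calls.
LOSS_BANDS = ((1_000_000, "high"), (50_000, "medium"))


@dataclass
class Assessment:
    exploitation: str          # "none", "poc", or "active"
    technical_impact: str      # "partial" or "total"
    estimated_loss_usd: float  # organization's own loss estimate


def loss_band(loss_usd: float) -> str:
    """Translate an estimated loss into a band using LOSS_BANDS."""
    for floor, label in LOSS_BANDS:
        if loss_usd >= floor:
            return label
    return "low"


def decide(a: Assessment) -> str:
    """Return Track, Track*, Attend, or Act for one assessment (illustrative logic)."""
    if a.exploitation not in ("none", "poc", "active"):
        raise ValueError(f"unknown exploitation value: {a.exploitation!r}")
    if a.technical_impact not in ("partial", "total"):
        raise ValueError(f"unknown technical impact: {a.technical_impact!r}")

    band = loss_band(a.estimated_loss_usd)
    total = a.technical_impact == "total"

    if a.exploitation == "active":
        return "Act" if (band == "high" or total) else "Attend"
    if a.exploitation == "poc":
        return "Attend" if band == "high" else "Track*"
    # No known exploitation: only escalate the worst-case combination.
    return "Track*" if (band == "high" and total) else "Track"


print(decide(Assessment("active", "partial", 10_000)))
print(decide(Assessment("none", "total", 2_000_000)))
```

Writing the thresholds down this way also addresses the automation concern: two analysts feeding in the same inputs get the same answer.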

EPSS: A Forward-Looking Prioritization Approach

The Exploit Prediction Scoring System (EPSS), published by FIRST in 2019, is another - and very promising - approach to vulnerability prioritization.

The latest version of the EPSS algorithm, v 3.0, analyzed over 6 million observed exploitation attempts of known vulnerabilities. It incorporates data from threat intelligence providers, CISA's KEV catalog, vulnerability characteristics, and other inputs. Its output is a number from 0 to 1 for every published CVE, indicating the likelihood of exploitation in the next 30 days. The score updates daily as new data emerges.

The results are impressive, to say the least. Using a traditional “fix all highs and criticals” (per CVSS) strategy, you would need to patch the majority of known issues (because most are high or critical). In doing so, you would fix ~82% of CVEs ever exploited (per the EPSS, not KEV, data set).

Compare that approach to using the EPSS v3 with a threshold score of 0.088 (remediating all issues that score higher than this rating). To achieve roughly the same outcome with EPSS, you would only need to resolve 7.3% of all known CVEs.

This is only ~12.5% of the fraction necessary when using CVSS!
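The comparison is simple enough to check by hand. The sketch below reproduces the arithmetic using the figures quoted in this post (the ~58% of CVEs rated high or critical and the 7.3% above the EPSS threshold), then shows how the threshold would be applied to a set of findings. The scores in `sample_scores` are invented for illustration and the finding names are placeholders.

```python
EPSS_THRESHOLD = 0.088  # threshold quoted above
CVSS_SHARE = 0.58       # ~58% of CVEs rated high or critical (quoted earlier)
EPSS_SHARE = 0.073      # 7.3% of CVEs score above the EPSS threshold

# Fraction of the CVSS-driven workload that the EPSS strategy requires.
relative_effort = EPSS_SHARE / CVSS_SHARE
print(f"Relative effort: {relative_effort:.1%}")  # about one eighth


def above_threshold(scores: dict, threshold: float = EPSS_THRESHOLD) -> list:
    """Return IDs scoring strictly above the threshold, highest score first."""
    selected = [cve for cve, p in scores.items() if p > threshold]
    return sorted(selected, key=lambda cve: scores[cve], reverse=True)


# Invented scores for placeholder findings; not real EPSS data.
sample_scores = {"finding-a": 0.92, "finding-b": 0.088, "finding-c": 0.31, "finding-d": 0.002}
print(above_threshold(sample_scores))
```

With the rounded inputs used here the ratio comes out slightly above 12.5%, consistent with the approximate figure above.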

Drawbacks of the EPSS include:

CVE-Centric — EPSS cannot provide scores for anything but published CVEs. Due to the need to compare “apples-to-apples,” FIRST only uses a given set of known vulnerabilities.

No Public Model or Data Set — The EPSS algorithm is not open-source, and the data set used to drive its decision-making is not public because some of its data partners are commercial vendors who do not want to release their intellectual property.

Not Customizable — EPSS can't take into account characteristics of a given environment, such as compensating controls or code dependencies, when determining exploitability.

Lack of Impact Information — EPSS has no way to describe the business or mission impact of a successful attack, either generally or in the context of a given system. While EPSS is very good at determining the likelihood of a given vulnerability being exploited, it cannot tell you much about what would happen if this occurred. Unlike the CVSS or SSVC, the EPSS does not even provide a way to describe the damage of such an attack and would require combination with another approach (such as the FAIR methodology) to do so.

While the EPSS is still an emerging standard, it can greatly assist security teams as they seek to deal with huge numbers of vulnerability findings.

Reachability Analysis: A Next-Level Prioritization Method

Reachability analysis takes vulnerability prioritization to the next level. By using call graphs to show relationships between software functions, you can understand open source library vulnerabilities in their real-world context, i.e. in a given downstream application.

By linking call graphs across dependencies, you can trace forward through your application code to see if an attacker could potentially access a given software flaw. Tracing backwards shows what code calls a given function, allowing you to assess operational impacts of changes.

This answers questions like:

- Is my code actually invoking this library and the vulnerable code within it?

- What parts of my codebase would be affected if we remove or update this dependency?

The precision of call graph-based analysis lets you smoke out those vulnerability scanner findings representing issues posing minimal risk in your product or network. It also enables you to evaluate the safety of library upgrades and the elimination of unnecessary dependencies.
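The forward and backward traces described above are both graph searches. The sketch below runs a breadth-first search over a toy call graph; every function and library name in it (`app.main`, `jsonlib.parse`, `yamllib.unsafe_load`, and so on) is hypothetical, and a real tool would build the graph from source or bytecode rather than a hand-written dictionary.

```python
from collections import deque

# Hypothetical call graph: each caller maps to the functions it calls.
CALL_GRAPH = {
    "app.main": ["app.handle_request", "app.load_config"],
    "app.handle_request": ["jsonlib.parse"],
    "app.load_config": ["yamllib.safe_load"],
    "jsonlib.parse": ["jsonlib._decode"],
    "yamllib.safe_load": [],
    "yamllib.unsafe_load": ["yamllib._construct"],  # shipped, but never called
}


def reachable_from(graph: dict, start: str) -> set:
    """Breadth-first search: every node reachable from start, including start."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for callee in graph.get(node, ()):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return seen


def reverse(graph: dict) -> dict:
    """Flip every edge so each callee maps to its callers."""
    rev = {}
    for caller, callees in graph.items():
        for callee in callees:
            rev.setdefault(callee, []).append(caller)
    return rev


# Forward trace: can the application entry point reach the flawed function?
forward = reachable_from(CALL_GRAPH, "app.main")
print("yamllib._construct" in forward)  # False
print("jsonlib._decode" in forward)     # True

# Backward trace: which code would be affected by changing jsonlib._decode?
callers = reachable_from(reverse(CALL_GRAPH), "jsonlib._decode") - {"jsonlib._decode"}
print(sorted(callers))  # ['app.handle_request', 'app.main', 'jsonlib.parse']
```

In this toy graph a flaw in `yamllib._construct` would be reported by a dependency scanner but is never invoked, which is exactly the kind of finding reachability analysis lets you deprioritize.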

The primary challenges with reachability analysis are:

- Ensuring the accuracy of the call graphs themselves. You’ll need a trusted tool to ensure your analysis is correct.

- Interpreting and communicating the findings of the analysis. While standardized methods of describing reachability analysis findings are still evolving, approaches like the Vulnerability eXploitability Exchange (VEX) can be especially helpful here.

- A requirement to be able to access both first-party (i.e. proprietary vendor) and third-party (i.e. open source) code to build the call graph. With certain products, it may not be feasible or contractually allowable to inspect proprietary code.

Especially when combined with other effective forms of vulnerability prioritization, though, reachability analysis is the way to go if you want the clearest risk picture.

Conclusion

Information security teams often need to sort through a huge amount of data. Parsing the signal from the noise is incredibly challenging but equally important.

While the CVSS initiated an era of standardized vulnerability analysis, it has proven relatively ineffective in driving informed risk decisions. KEV essentially adds a new category to the CVSS, allowing for more granular analysis but still leaving much to be desired. SSVC is an interesting approach but will be challenging to adopt at scale. The EPSS is promising and can give a rapid snapshot of any given CVE’s likelihood of exploitation. If you want the most accurate security picture possible, though, you are going to need reachability analysis.

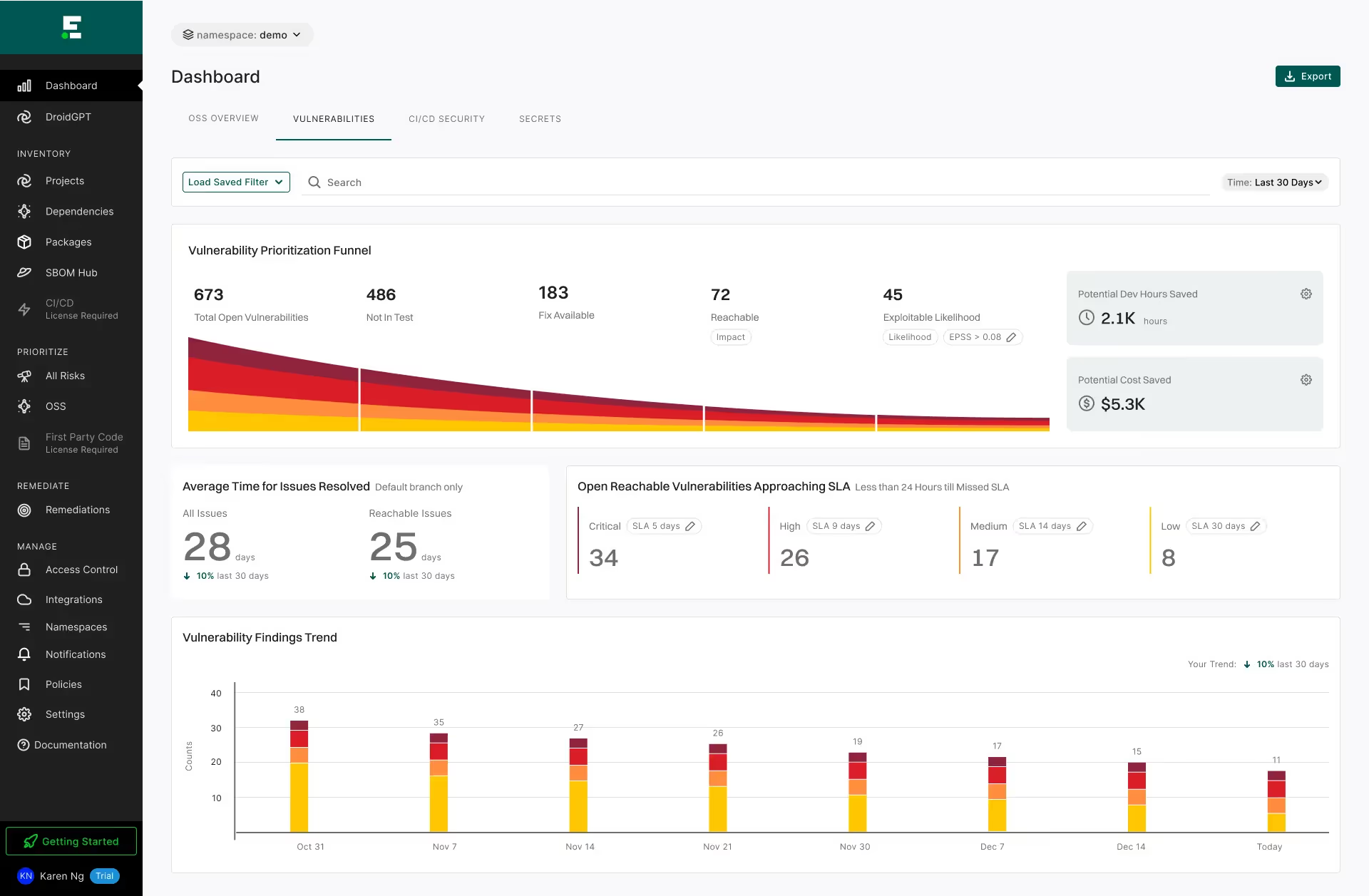

At Endor Labs, we've seen our customers be most successful when combining some of these technologies with some questions specific to their environment - and notice CVSS isn't in this list!

- Is the function containing the vulnerability “reachable”?

- Is there a fix available?

- Is it in production code (not test code)?

- Is there a high probability of exploitation (high EPSS)?

- Is the risk of performing the upgrade lower than the security risk it repairs?
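One way to read these questions is as a filter applied to each scanner finding. The sketch below treats a finding as a priority only when all five answers are "yes", which is an assumption about how to combine them rather than a description of how any product does it. The `Finding` fields are hypothetical, and the cutoff for "high EPSS" simply reuses the 0.088 threshold quoted earlier in this post.

```python
from dataclasses import dataclass

HIGH_EPSS = 0.088  # assumption: reuse the EPSS threshold quoted earlier


@dataclass
class Finding:
    cve: str
    reachable: bool      # is the vulnerable function reachable?
    fix_available: bool  # is there a fix available?
    in_production: bool  # production code rather than test code?
    epss: float          # probability of exploitation, 0 to 1
    upgrade_safe: bool   # upgrade risk judged lower than the security risk?


def should_prioritize(f: Finding) -> bool:
    """True only when every one of the five questions is answered 'yes'."""
    return (
        f.reachable
        and f.fix_available
        and f.in_production
        and f.epss > HIGH_EPSS
        and f.upgrade_safe
    )


# Placeholder findings with invented values.
findings = [
    Finding("finding-a", True, True, True, 0.40, True),
    Finding("finding-b", False, True, True, 0.90, True),  # not reachable
    Finding("finding-c", True, True, False, 0.70, True),  # test code only
    Finding("finding-d", True, True, True, 0.01, True),   # low EPSS
]

priority = [f.cve for f in findings if should_prioritize(f)]
print(priority)  # ['finding-a']
```

A team could just as reasonably rank findings by how many questions they pass instead of requiring all five; the strict version is shown only because it is the simplest to reason about.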

In the following example, you can see a 94% reduction in the number of findings:

Reachability with Endor Labs

Interested in learning more about how Endor Labs does it? Watch this short video to see reachability-based SCA in action.

To get started with Endor Labs, start a free 30-day trial or contact us to discuss your use cases.
