Anthropic announced Claude Code Security last week, a new capability that scans codebases for vulnerabilities and suggests patches for human review. It's currently a limited research preview with a sign-up form for Enterprise and Team customers.

It’s not the first code security research preview we’ve seen from the frontier labs. In October 2025, Google announced a research preview of CodeMender, and OpenAI announced a research preview of Aardvark. These marketing announcements leave many questions unanswered about how well the previews perform: accuracy, cost efficiency, scale, and investment size, to name a few. Without benchmarks and evidence, it’s hard to judge whether they work.

But this is still a big deal. Not because of the products themselves, but because of what they signal.

When the world's leading AI companies decide that application security deserves dedicated products, it confirms something we've been saying for years: AppSec is the most critical frontier in cybersecurity.

The threat landscape is accelerating in both directions

Google's 2026 Cybersecurity Forecast paints a stark picture. Software supply chains and AI-generated code are both under siege from multiple directions at once.

On the supply chain side, the report highlights escalating compromises: China-nexus actors rapidly exploiting the React2Shell vulnerability, ransomware groups exploiting managed file transfer software across hundreds of targets simultaneously, and North Korean threat actors combining supply chain attacks with digital asset theft. These aren't hypothetical risks. They're active campaigns targeting the dependencies and packages that every application relies on.

The attack surface is expanding on two fronts at once: the code being written is less secure, and the attacks targeting that code are more sophisticated. That's a compounding problem, and it raises an obvious question. Can the same AI coding agents contributing to this problem also solve it?

Coding agents have a foundational security problem

The short answer: not yet. And the data is clear on this.

If AI coding agents could handle security well, the code they generate would already be secure. It's not. Researchers at Carnegie Mellon University, Columbia University, Johns Hopkins University, and other institutions built a benchmark of 200 real-world coding tasks designed to test whether AI agents write secure code. The results are stark: while 61% of solutions by Claude 4 Sonnet were functionally correct, only 10.5% were both functionally correct and secure.
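To make those percentages concrete, here is a back-of-the-envelope calculation. It assumes both percentages use the full 200-task benchmark as the denominator, which the summary above implies but does not state. The variable names are ours.

```python
# Rough arithmetic on the benchmark figures quoted above.
# Assumption: both percentages are measured against all 200 tasks.
TOTAL_TASKS = 200
PCT_CORRECT = 61.0              # functionally correct
PCT_CORRECT_AND_SECURE = 10.5   # functionally correct AND secure

correct = round(TOTAL_TASKS * PCT_CORRECT / 100)
correct_and_secure = round(TOTAL_TASKS * PCT_CORRECT_AND_SECURE / 100)
correct_but_insecure = correct - correct_and_secure

# Share of *working* solutions that still contain a security flaw.
insecure_share = correct_but_insecure / correct

print(f"{correct} working solutions, {correct_and_secure} of them secure")
print(f"{insecure_share:.1%} of working solutions are insecure")
```

Under that assumption, roughly five out of every six solutions that pass the functional tests would still fail the security tests.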

This isn't a fringe finding. It's consistent with a growing body of research showing that AI models introduce vulnerabilities at a faster pace than human developers. Fundamentally, it’s because AI coding agents don’t know what “secure” looks like in the context of a specific application.

CodeRabbit, an AI code review startup, came to a similar conclusion in their 2025 State of AI vs Human Code Generation Report. They found that AI-generated pull requests (PRs) contained 1.7x more issues and 1.4x more critical security issues than human pull requests. In particular, AI made 2.74x more cross-site scripting (XSS) and 1.91x more insecure object reference flaws than humans. These are dangerous security flaws that teams need to catch.

So why does AI make these mistakes?

The problem begins with how AI models are trained. General-purpose LLMs are trained on open source code on the internet. This is unlabeled data, so they learn the good with the bad, which in this case means code vulnerabilities. Coming from the open source security space, it’s a problem we’re intimately familiar with at Endor Labs.

Vulnerabilities (CVEs) in open source projects have increased by 490% over the last decade. Last year alone saw more than 48,000 CVEs published. AI models aren’t trained in a way that helps them distinguish between safe and unsafe code patterns. In fact, studies have shown that AI code gets less secure with each prompt, even when developers specifically ask for secure code.

But even with the right tools, there's a deeper problem with relying on AI alone for security.

Security needs determinism and evidence

Security tools need determinism. When you run a security scan, you need reliable, consistent findings. The same code should produce the same results every time. Your security team and your customers need to trust that a clean scan means clean code, and that a flagged vulnerability is a real one. This is the foundation of auditable systems.

AI models are probabilistic by nature. They don't offer that guarantee. You can run the same prompt twice and get different results. That's fine for code generation, where a developer reviews the output. It's not fine for security, where a missed vulnerability could mean a breach, and a false positive could mean an engineer wastes hours chasing ghosts.
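As a minimal sketch of what "same code, same results" can mean in practice, the toy scanner below applies fixed rules in a fixed order and hashes the findings into a fingerprint that an audit record could store. The rule IDs, regular expressions, and function names are illustrative assumptions, not the behavior of any real scanner.

```python
import hashlib
import json
import re

# Hypothetical rules for illustration only; real scanners use far richer analysis.
RULES = {
    "hardcoded-secret": re.compile(
        r"(password|api_key)\s*=\s*['\"][^'\"]+['\"]", re.IGNORECASE
    ),
    "shell-injection": re.compile(r"subprocess\.\w+\(.*shell\s*=\s*True"),
}


def scan(source: str) -> list[dict]:
    """Apply every rule to every line, in a fixed order, so output is repeatable."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for rule_id in sorted(RULES):
            if RULES[rule_id].search(line):
                findings.append({"rule": rule_id, "line": lineno})
    return findings


def fingerprint(findings: list[dict]) -> str:
    """Hash a canonical JSON encoding of the findings for audit comparison."""
    canonical = json.dumps(findings, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


SAMPLE = "\n".join([
    "import subprocess",
    'password = "hunter2"',
    "subprocess.run(cmd, shell=True)",
])

first_run = scan(SAMPLE)
second_run = scan(SAMPLE)

# Identical input yields identical findings and an identical fingerprint.
assert fingerprint(first_run) == fingerprint(second_run)
print(first_run)
```

A fingerprint that changes between two scans of the same commit is a signal that something other than the code changed, which is exactly the property an auditor needs to rely on.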

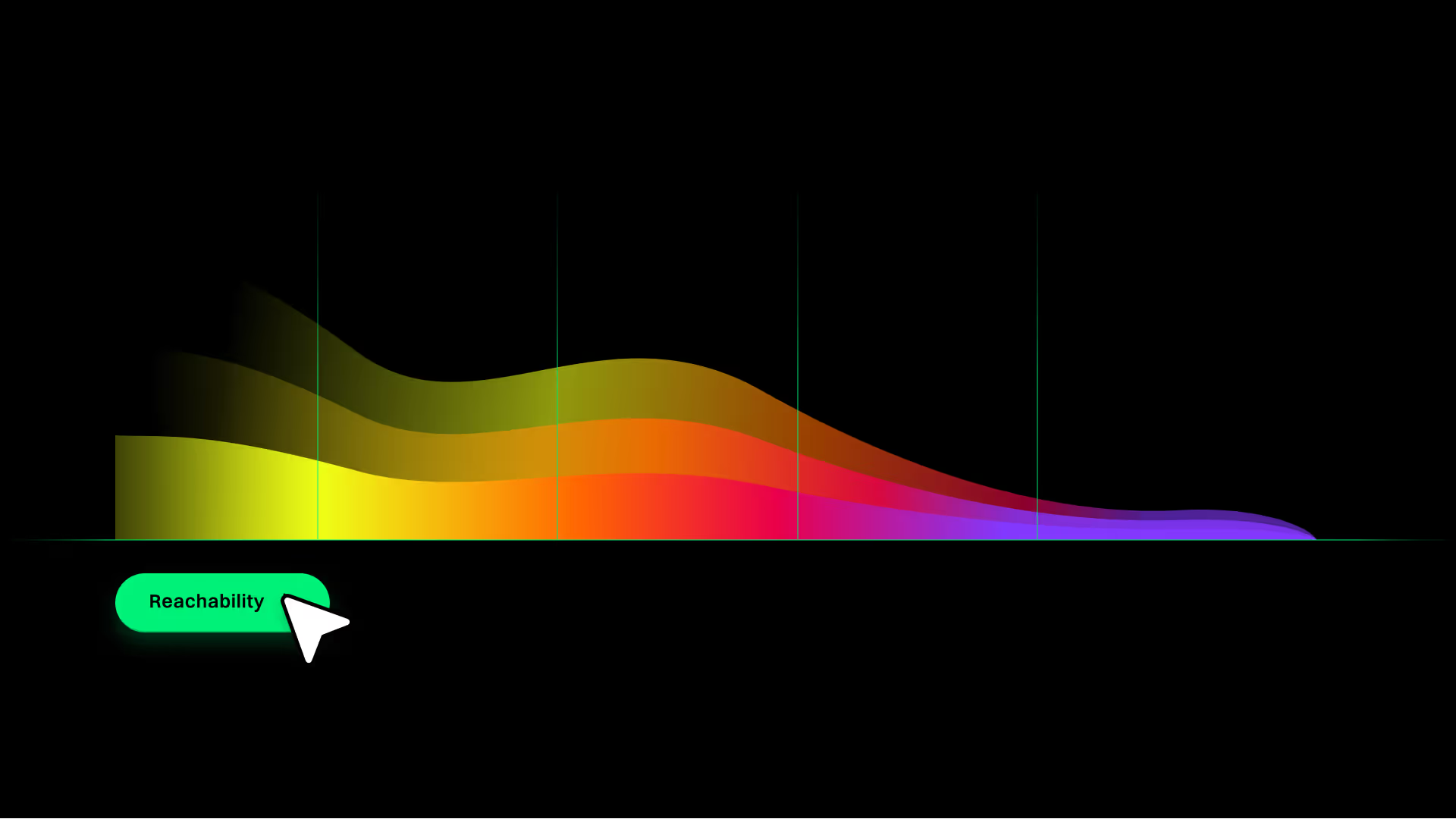

This doesn't mean AI has no role in security — far from it. But it means you need deep code and security context layered underneath the AI to provide the deterministic foundation so you can take advantage of agentic reasoning on top of it. For code, that means you need to understand reachability, data flows, code relationships, and application architecture.
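Reachability is the easiest of these to illustrate. The sketch below walks a hand-written call graph from an entry point and separates vulnerable functions that can actually be called from those that cannot. The graph, the function names, and the set of "vulnerable" functions are all made up for the example; building an accurate call graph from real code is the hard part and is not shown here.

```python
from collections import deque

# Hypothetical call graph: caller -> list of callees.
CALL_GRAPH = {
    "main": ["handle_request", "load_config"],
    "handle_request": ["parse_body", "render"],
    "parse_body": ["yaml_load"],
    "render": [],
    "load_config": [],
    "legacy_export": ["pickle_loads"],  # never called from main
    "yaml_load": [],
    "pickle_loads": [],
}

# Hypothetical set of functions with known vulnerabilities.
VULNERABLE = {"yaml_load", "pickle_loads"}


def reachable_from(graph: dict[str, list[str]], entry_points: list[str]) -> set[str]:
    """Breadth-first search over the call graph starting at the entry points."""
    seen = set(entry_points)
    queue = deque(entry_points)
    while queue:
        fn = queue.popleft()
        for callee in graph.get(fn, []):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return seen


reachable = reachable_from(CALL_GRAPH, ["main"])
reachable_vulns = sorted(VULNERABLE & reachable)
unreachable_vulns = sorted(VULNERABLE - reachable)

print("fix first:", reachable_vulns)
print("lower priority:", unreachable_vulns)
```

Because the traversal is a plain graph search, the same graph always yields the same answer, which is the kind of deterministic evidence an AI agent can then reason on top of.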

The same holds for the software supply chain. When a malicious package targets your build pipeline, you can't rely on probabilistic detection. You need deterministic analysis of package behavior, provenance, and composition — the kind of evidence that lets a security team act with confidence rather than chase alerts.
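One simple form of deterministic supply chain evidence is digest pinning: record the SHA-256 of a package artifact when it is reviewed, and refuse anything that does not match. The sketch below assumes a hypothetical package name and an in-memory dictionary standing in for a lockfile; it illustrates the idea and is not a description of any particular tool.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the hex-encoded SHA-256 digest of the artifact bytes."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(name: str, artifact: bytes, pinned: dict[str, str]) -> tuple[bool, str]:
    """Accept an artifact only if its digest matches the pinned value."""
    expected = pinned.get(name)
    if expected is None:
        return False, f"{name}: no pinned digest, refusing to install"
    actual = sha256_hex(artifact)
    if actual != expected:
        return False, f"{name}: digest mismatch"
    return True, f"{name}: digest verified"


# Hypothetical artifact contents and package name, for illustration only.
reviewed = b"def add(a, b):\n    return a + b\n"
tampered = reviewed + b"import os  # injected payload would go here\n"

# Stand-in for a lockfile: package name -> digest recorded at review time.
PINNED = {"examplepkg": sha256_hex(reviewed)}

print(verify_artifact("examplepkg", reviewed, PINNED))
print(verify_artifact("examplepkg", tampered, PINNED))
print(verify_artifact("unknownpkg", reviewed, PINNED))
```

The check either passes or fails, with no probability attached, and the pinned digest itself becomes part of the audit trail.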

Determinism and evidence give security teams the foundation to act, and the ability to audit and report whether a risk was fixed. But there's another, more urgent, structural issue.

Security demands independent verification

If your coding agent writes the code and tells you the code is secure, who's verifying that claim? It's the same model. The same context window. The same blind spots.

Customers will need independent capabilities to verify the outputs of their coding agents. This is a structural reality of how security works. You don't let the developer who wrote the code also sign off on the security review. The same principle applies to AI.

When an organization adopts Claude Code, Cursor, GitHub Copilot, OpenAI Codex, or any other AI coding tool, they need a security layer that works across all of them, one that brings its own deep understanding of the codebase, the dependency graph, and the threat landscape. That's a fundamentally different product from a feature bundled into a coding assistant.

Security teams need products, not features

Enterprise security teams don't adopt features. They adopt products with workflows that map to how they operate, customizable policies that reflect their risk tolerance, verifiable results they can present to auditors and leadership, and integrations into the CI/CD pipelines, ticketing systems, and reporting dashboards they already use.

Security teams need to triage findings across hundreds of repositories and prioritize by business impact. They need audit trails and compliance reporting. They need SLAs, role-based access, and the ability to define what "secure" means for their organization, not accept a one-size-fits-all model output.

At the end of the day, all these research previews from the frontier labs are just more scanning and detection tools, without the policy, governance, and reporting systems that enterprises require. They may well become valuable tools for developers one day, but good security has never been just about detecting issues or even fixing them. It’s always been about managing the balance between speed and risk.

It's the difference between a demo that impresses and a product that ships. And it's the work we've been doing, not because we predicted any specific announcement, but because we understood the trajectory. When AI agents started writing production code at scale, it was clear that security would need to evolve from reactive scanning into an intelligent, integrated layer across the entire software development lifecycle.

We've seen this movie before

Every time a hyperscaler enters a specialized market, the same narrative plays out: the incumbents are done, the category is commoditized, the focused players can't compete with the platform. And every time, the opposite happens.

Every major cloud provider has built native monitoring and observability into their platforms — AWS CloudWatch, Google Cloud Operations, Azure Monitor. Each one was supposed to eliminate the need for standalone observability tools. Yet, Datadog built a purpose-built observability platform with deep integrations across every cloud, every stack, every workflow that operations teams actually use. Today Datadog is worth over $40 billion. The bundled features didn't go away — they just became table stakes that enterprises outgrew.

When AWS, Azure, and GCP each launched native cloud security posture management tools, the assumption was that no startup could compete with the cloud provider's own view of its infrastructure. Wiz built an agentless, cross-cloud security platform that works across all of them, giving security teams a unified view that no single cloud provider could offer. Wiz was acquired by Google for $32 billion, the largest cybersecurity acquisition in history. The cloud providers validated the problem. The focused company built the product that actually solved it.

The opportunity ahead

We're excited about the direction the industry is heading. Anthropic, Google, OpenAI, and others are doing meaningful work to raise the profile of application security and bring more engineering talent to the problem. The more attention this space gets, the better, because the problem is massive and growing. Every improvement in code security is a win for our entire industry, whether that happens at the model, agent, or security layer.

But the principles are clear. Production security requires deterministic foundations, not probabilistic outputs. It requires independent verification, not self-assessment by the same tool that wrote the code. It requires products built for security teams, not features bundled into coding assistants. And it requires deep, proprietary security intelligence that general-purpose models don't have access to.

The best outcomes for the industry won't come from foundation model companies or security vendors working in isolation. They'll come from the technical breakthroughs of companies like Anthropic, Google, and OpenAI matched with innovation from security-focused frontier labs and ISVs.

Something big is coming

Everything we've outlined in this post (determinism, independence, proprietary intelligence, product-grade workflows) isn't a pipe dream. It's what we've been building at Endor Labs. A purpose-built security platform for the agentic era, designed from the ground up to do what no coding assistant or frontier model can do alone.

Look out for a related announcement from us on March 3rd.
